Researchers create humanoid robot so advanced it can predict when you are going to smile

- Researchers at Columbia University have developed a robotic head called Emo

- He’s been trained to read non-verbal cues, like eye contact and smiling, to improve robot-human interactions

- Emo is so good at reading subtle changes in a human face that he can anticipate when a human is about to smile and respond before they do

Published on Apr 09, 2024 at 8:13 PM (UTC+4)

by Andie Reeves

Last updated on Apr 10, 2024 at 3:11 PM (UTC+4)

Edited by Amelia Jean Hershman-Jones

Columbia University has developed a robot called Emo which specializes in facial expressions.

He’s been designed to bridge the gap between humans and robots through non-verbal communication like smiling.

Emo is currently just a head, but the team plans to integrate a language model system like ChatGPT.

Ultimately they hope this project will help humans and robots have better and more meaningful interactions.


Thanks to models like ChatGPT, the communication skills of AI are at an all-time high.

There’s the humanoid robot who can perform an uncanny impression of Elon Musk, and Ameca, the robot who recently had an exclusive chat with Supercar Blondie.

But one huge element of human-robot interaction is still largely lacking: non-verbal communication.

Researchers at Columbia University are on a mission to change that, building trust between humans and robots one smile at a time.

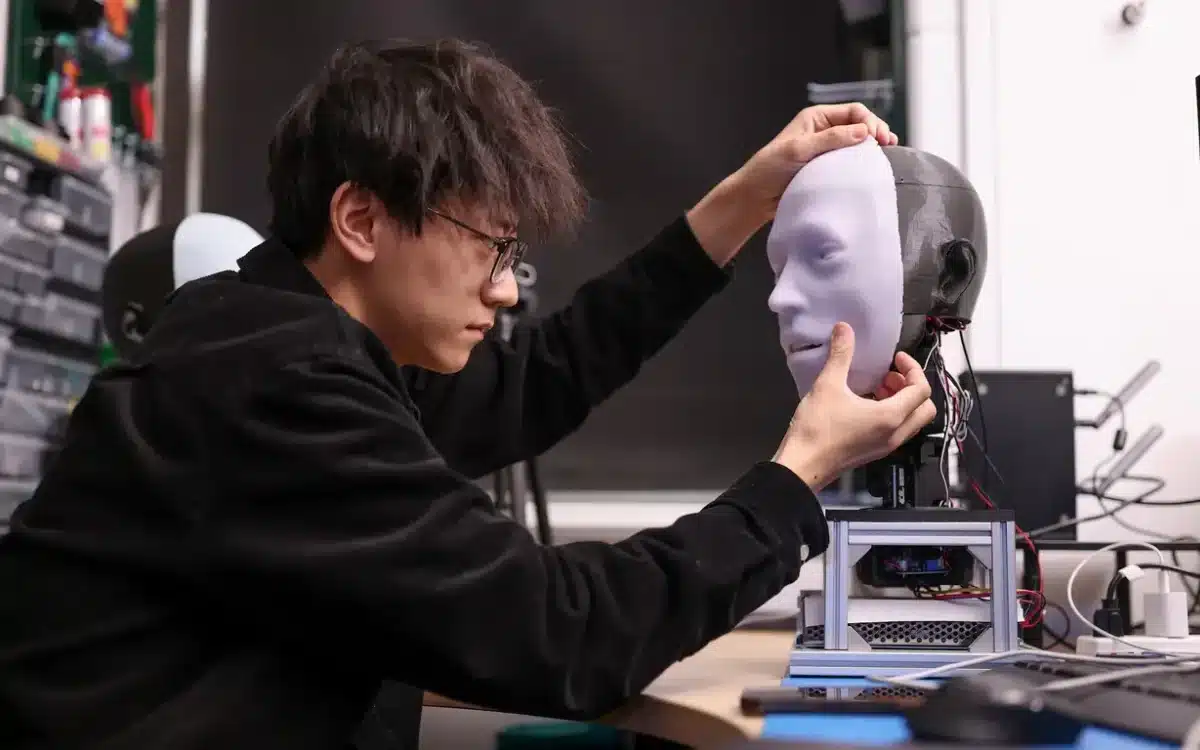

They’ve created a robotic human head complete with eyes and skin, and called it ‘Emo’.

Emo has 26 separate actuators, which allow him to express the micromovements required to produce realistic facial expressions.

He is also equipped with high-resolution cameras embedded in his eyes and a layer of silicone ‘skin’ that conceals his mechanical components.

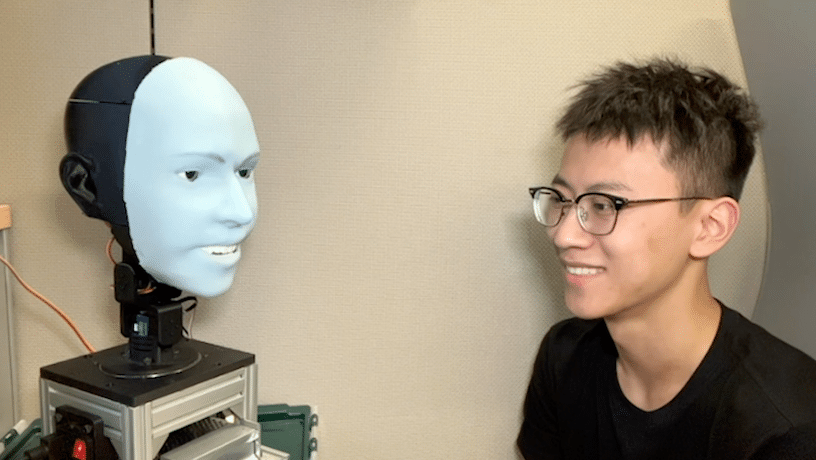

The eyes help him to maintain eye contact during conversation, a crucial part of human interaction.

The team faced two challenges: designing a robot that could produce a wide range of facial expressions, and enabling it to read them on human faces.

Led by Yuhang Hu, a Columbia Engineering PhD student, they built two AI models to work together.

One reads a human’s face and predicts the expression they’re about to make by analyzing subtle changes in it.

The other immediately issues motor commands to Emo’s face so that it replicates or responds to that expression.

First, they placed Emo in front of a mirror and instructed him to spend hours practicing various facial expressions.

Next, Emo learned human facial expressions by analyzing videos frame by frame.

Emo assimilated this information so effectively that he can now anticipate when a human is about to smile and respond before they do.
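
For readers curious what “anticipating a smile” could look like in code, here is a toy sketch of the idea. It is not the Columbia team’s method: their system uses trained AI models, whereas this example uses a simple hand-written rule. The function names, the mouth-width measurements, and the thresholds are all assumptions made up for illustration; only the figure of 26 actuators comes from the article.

```python
from typing import List, Optional


def predict_smile_onset(
    mouth_widths: List[float],
    rise: float = 0.02,
    window: int = 3,
) -> Optional[int]:
    """Return the index of the first frame where a smile looks imminent.

    Hypothetical rule: flag a smile once the mouth width has grown by
    more than `rise` (relative to the previous frame) for `window`
    consecutive frames. Returns None if that never happens.
    """
    streak = 0
    for i in range(1, len(mouth_widths)):
        prev, cur = mouth_widths[i - 1], mouth_widths[i]
        if prev > 0 and (cur - prev) / prev > rise:
            streak += 1
            if streak >= window:
                return i
        else:
            # Growth stalled, so start counting again.
            streak = 0
    return None


def smile_command(intensity: float, n_actuators: int = 26) -> List[float]:
    """Map a smile intensity to one target value per actuator.

    Hypothetical stand-in for the second model: it clamps the intensity
    to the range [0, 1] and sends the same target to every actuator.
    """
    clamped = max(0.0, min(1.0, intensity))
    return [clamped] * n_actuators


# Made-up mouth widths, one per video frame, for a face starting to smile.
frames = [1.00, 1.00, 1.01, 1.04, 1.08, 1.13, 1.20]
onset = predict_smile_onset(frames)
if onset is not None:
    targets = smile_command(0.8)
```

In this sketch the rule fires while the mouth is still widening, which is the point: the robot can start moving its own face before the human smile is complete.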

Hu believes that this will improve the interaction quality between robots and humans.

“Traditionally robots have not been designed to consider humans’ facial expressions during interactions,” Hu says.

“Now the robot can integrate human facial expressions as feedback.”

Going forward, the team will be integrating a language model system like ChatGPT to take Emo’s conversation skills to the next level.

For now, Emo works only with smiles while the team figures out how to incorporate trickier expressions like pouting or frowning.


Andie is a content writer from South Africa with a background in broadcasting and journalism. Starting her career in the glossy pages of Cosmopolitan and Marie Claire, Andie has a broad portfolio, covering everything from sustainability solutions to celebrity car collections. When not at her laptop Andie can be found sewing, recording her podcast, taking board games too seriously or road-tripping in her bright green Kia.